How Mastery Learning Algorithms Can Support Adaptive Math Learning in the Classroom

- Derek Lomas

- Jul 15, 2021

Updated: Dec 5, 2021

Artificial Intelligence can seem like magic when it accomplishes something impressive without being fully understood. In this short article, I make the case that adaptive learning in the classroom will actually work better when users understand what the algorithms are doing.

There are many different models for adaptive learning, which I summarize in the sidebar below. An adaptive learning system has four important elements. First, the content: assessment questions and instructional resources (videos, images, instructions, etc.). Second, the data: databases describing the properties of the content (type, topic, difficulty, etc.) and of the students (past performance and experience in the program, and estimated ability levels). Third, the algorithm: the automated processes that govern a student's progression. Finally, the user experience, which is where the real magic happens. A well-designed product with weak adaptive learning will probably have a better impact than a poor product with great adaptivity. A great product also includes support beyond the screen, for instance, curriculum for classroom teachers. This article describes curriculum-based adaptive learning algorithms that are specifically designed to be easy for teachers and students in the classroom to understand.

Sidebar: The Magic Decoded

There are many different "adaptive learning" styles out there. Here is a summary:

Bayesian Knowledge Tracing (BKT): This adaptivity relies on items carefully tagged with the knowledge components (skills) they exercise. As a student answers items correctly or incorrectly, the model updates its estimate of the probability that the student has mastered each skill, which in turn predicts whether they will get the next item correct. Once a skill is judged mastered, its questions are removed from practice. This allows students to progress faster through the curriculum.
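
To make the BKT update concrete, here is a minimal sketch of the standard four-parameter model for a single skill. The parameter values and the 0.95 mastery cutoff are illustrative assumptions, not values from any particular product; real systems fit them per skill from student data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BKTParams:
    p_init: float = 0.2    # P(L0): chance the skill is already known before practice (assumed)
    p_learn: float = 0.15  # P(T): chance of learning the skill at each practice opportunity (assumed)
    p_guess: float = 0.2   # P(G): chance of a correct answer without mastery (assumed)
    p_slip: float = 0.1    # P(S): chance of a wrong answer despite mastery (assumed)


MASTERY_THRESHOLD = 0.95  # assumed cutoff for "mastered"; not taken from the article


def predict_correct(p_known: float, params: BKTParams) -> float:
    """Probability that the next answer on this skill is correct."""
    return p_known * (1 - params.p_slip) + (1 - p_known) * params.p_guess


def update(p_known: float, correct: bool, params: BKTParams) -> float:
    """Bayes' rule on one observed answer, followed by the learning transition."""
    if correct:
        known = p_known * (1 - params.p_slip)
        posterior = known / (known + (1 - p_known) * params.p_guess)
    else:
        known = p_known * params.p_slip
        posterior = known / (known + (1 - p_known) * (1 - params.p_guess))
    # The student may also learn the skill from this practice opportunity.
    return posterior + (1 - posterior) * params.p_learn


def is_mastered(answers: list[bool], params: BKTParams = BKTParams()) -> bool:
    """Replay a student's answers on one skill and compare against the mastery cutoff."""
    p_known = params.p_init
    for correct in answers:
        p_known = update(p_known, correct, params)
    return p_known >= MASTERY_THRESHOLD
```

With these assumed parameters, one correct answer raises the mastery estimate from 0.2 to 0.6, two correct answers leave it near 0.89, and a third pushes it to about 0.98, at which point the skill's questions could be dropped from practice.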

Performance Factors Analysis (PFA): In this approach, an alternative to BKT, a student's past successes and failures on each skill are weighted to predict the next answer, and previously successful items are repeated regularly in the future. This is best for memorizing paired associates, as it supports spaced practice.
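
In its usual formulation, PFA is a logistic model: each skill tagged on an item contributes an easiness term plus weighted counts of the student's prior successes and failures on that skill. The sketch below shows only that prediction step; the skill names and parameter values are hypothetical, and the scheduling of repeated items is a separate layer not shown here.

```python
import math

# Hypothetical per-skill parameters: (beta, gamma, rho)
#   beta:  baseline easiness of the skill
#   gamma: weight added per prior success on the skill
#   rho:   weight added per prior failure on the skill
PARAMS = {
    "fractions": (-0.5, 0.3, 0.1),
    "decimals": (0.2, 0.25, 0.05),
}


def pfa_probability(skills, successes, failures, params=PARAMS):
    """P(correct) on an item tagged with `skills`, given per-skill success/failure counts."""
    m = 0.0
    for skill in skills:
        beta, gamma, rho = params[skill]
        m += beta + gamma * successes.get(skill, 0) + rho * failures.get(skill, 0)
    return 1.0 / (1.0 + math.exp(-m))  # logistic link


print(pfa_probability(["fractions"], {}, {}))
print(pfa_probability(["fractions"], {"fractions": 3}, {}))
```

Because the counts enter linearly before the logistic link, each additional success on a skill nudges the predicted probability upward, which is what a scheduler can use to decide when an item is due again.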

Item Response Theory Adaptivity (IRT): Item response statistics are used to measure the difficulty of items and the ability of students on the same scale. This allows an adaptive test to target a particular success rate (50% success brings the most information, but puts students in tears). This is mostly used in adaptive assessments.
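
A minimal sketch of IRT-style item selection, assuming the simplest IRT model (the one-parameter Rasch model) with student ability and item difficulty already estimated on the same logit scale. Under this model an item's Fisher information is p(1 − p), which peaks at a 50% success rate; the `target` parameter lets a system aim for a friendlier rate instead.

```python
import math


def p_correct(theta: float, b: float) -> float:
    """Rasch (1PL) model: chance a student of ability theta answers an item of difficulty b correctly."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def information(theta: float, b: float) -> float:
    """Fisher information of a Rasch item at ability theta: p * (1 - p), largest when p = 0.5."""
    p = p_correct(theta, b)
    return p * (1 - p)


def pick_item(theta: float, difficulties: list[float], target: float = 0.5) -> float:
    """Choose the item whose predicted success rate is closest to `target`."""
    return min(difficulties, key=lambda b: abs(p_correct(theta, b) - target))


bank = [-2.0, 0.0, 2.0]                    # hypothetical item difficulties
print(pick_item(0.0, bank))                # most informative item for an average student
print(pick_item(0.0, bank, target=0.85))   # easier item that keeps success near 85%
```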

Knowledge Space Theory (KST): In this approach, the knowledge similarity between items is statistically modeled. This means that items don’t need to be carefully tagged. As students answer more questions, the model updates its predictions about the degree of mastery the student has achieved in different areas. The algorithm then typically uses difficulty targeting like IRT (e.g., target 85% correct).
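
In its classical combinatorial form, KST represents what a student knows as a "knowledge state", and only certain states are feasible. A common way to build the feasible states is from prerequisite relations between items. The sketch below assumes a small hypothetical prerequisite map, enumerates the feasible states by brute force (fine only for toy domains), and computes the "outer fringe": the items a student in a given state is ready to learn next. Estimating a student's state from their answers, which real systems do probabilistically, is not shown.

```python
from itertools import combinations

# Hypothetical prerequisite map: each item lists the items it directly depends on.
PREREQS = {
    "count": set(),
    "add": {"count"},
    "subtract": {"count"},
    "multiply": {"add"},
}


def is_state(items: frozenset, prereqs=PREREQS) -> bool:
    """A feasible knowledge state contains every prerequisite of each item it contains."""
    return all(prereqs[i] <= items for i in items)


def knowledge_states(prereqs=PREREQS) -> list[frozenset]:
    """Enumerate all feasible states (exponential; only for small illustrative domains)."""
    items = sorted(prereqs)
    return [
        frozenset(combo)
        for r in range(len(items) + 1)
        for combo in combinations(items, r)
        if is_state(frozenset(combo), prereqs)
    ]


def outer_fringe(state: frozenset, prereqs=PREREQS) -> set:
    """Items the student is ready to learn: adding any one of them keeps the state feasible."""
    return {i for i in prereqs if i not in state and is_state(state | {i}, prereqs)}


print(outer_fringe(frozenset({"count"})))
```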

Linear Neuropsychological Approaches: These approaches come from training primates in brain plasticity studies. Items are divided into many small difficulty levels, and users advance based on progression rules. For instance, the learner might need to get at least 3 in a row correct to go up a level of difficulty but will go down a level of difficulty when they get one wrong.
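
The progression rule in the example above (three correct in a row to go up, one wrong to go down) is a simple staircase. A minimal sketch, assuming integer difficulty levels from 1 to a hypothetical maximum of 20:

```python
def next_level(level: int, streak: int, correct: bool, *,
               up_after: int = 3, min_level: int = 1, max_level: int = 20) -> tuple[int, int]:
    """Return (new_level, new_streak) under an N-correct-up / 1-wrong-down rule."""
    if not correct:
        # Any error drops one level and resets the streak.
        return max(min_level, level - 1), 0
    streak += 1
    if streak >= up_after:
        # Enough consecutive correct answers: move up one level and start a new streak.
        return min(max_level, level + 1), 0
    return level, streak


def run(answers: list[bool], start_level: int = 1) -> list[int]:
    """Level after each answer in a sequence."""
    level, streak = start_level, 0
    levels = []
    for correct in answers:
        level, streak = next_level(level, streak, correct)
        levels.append(level)
    return levels


print(run([True, True, True, True, False]))
```

A rule of this shape tends to settle at a difficulty where the learner succeeds about 79% of the time, because moving up and moving down become equally likely when the chance of three correct in a row, p³, equals 0.5.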

Handcrafted Adaptivity: Production rules, or if-then statements, that simply control the flow of the program based on students’ performance. For example: “If you fail at this item, watch this related video”.
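
Production rules like the one quoted above can be written as an ordered list of condition/action pairs. In this minimal sketch, the state fields (`last_correct`, `streak`) and action names are hypothetical placeholders:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    condition: Callable[[dict], bool]  # "if" part, evaluated on the student's current state
    action: str                        # "then" part: a hypothetical action name


# Rules are checked in order; the first one whose condition holds wins.
RULES = [
    Rule(lambda s: not s["last_correct"], "watch_related_video"),
    Rule(lambda s: s["streak"] >= 3, "advance_to_next_item"),
]


def next_action(state: dict, rules: list[Rule] = RULES,
                default: str = "next_practice_item") -> str:
    for rule in rules:
        if rule.condition(state):
            return rule.action
    return default


print(next_action({"last_correct": False, "streak": 0}))
```

Ordering matters: because rules fire first-match, placing the failure rule first guarantees a wrong answer always leads to the video, regardless of any earlier streak.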

All of the above approaches have been implemented successfully in educational products. But the educational design of the product matters far more than the algorithm: any of the above algorithms will account for only a small portion of a learning system's efficacy. The quality of the assessments, the quality of the interventions, and the overall product model can influence student outcomes much more than the algorithm itself.

'Mastery Learning Algorithms' are based on Benjamin Bloom’s 1968 model of Mastery Learning. The core idea is simple: following instruction, learners should be assessed. If mastery is achieved, learners should move to the next topic. If mastery isn’t achieved, teaching should continue until it is. This model sounds great, but it is difficult to practice in real classrooms with 20 students all at different levels. Our goal was to design an adaptive learning algorithm that could help teachers implement this pedagogical model in blended classroom settings, online or offline.

The Mastery Learning Algorithm is based on Bloom’s 1968 model of mastery learning

Measure what you treasure: Adaptive learning algorithms are designed to improve student outcomes. But the algorithms aren't necessarily designed to measure whether the actions they take are effective. This is important: just because a learning system is adaptive doesn’t mean it will actually produce better outcomes.

To address this, the Mastery Learning Algorithm is designed to measure its own results: after each teaching cycle, the algorithm assesses whether a student learned. Peter Norvig, the director of research at Google, defines intelligence as “the ability to select an action that is expected to maximize a performance measure.” The Mastery Learning Algorithm is intelligent not because it uses machine learning or neural networks, but because it is designed to measure whether it is working effectively. The algorithm supports embedded A/B tests (controlled experiments) that can reveal which learning resources work best for each student.
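
One way to picture an embedded A/B test is sketched below, assuming each intervention resource is followed by a short pass/fail quiz, as described above. The class and resource names are hypothetical. It randomly assigns resources and compares post-quiz pass rates across all students; a production system would also need enough samples and a significance test before declaring a winner, and would condition on student characteristics to find what works best for each student.

```python
import random
from collections import defaultdict


class ResourceExperiment:
    """Randomly assigns one of several intervention resources and tracks post-quiz pass rates."""

    def __init__(self, resources, seed=None):
        self.resources = list(resources)
        self.rng = random.Random(seed)  # seeded for reproducible assignment
        self.trials = defaultdict(int)
        self.passes = defaultdict(int)

    def assign(self):
        """Uniform random assignment, the 'controlled' part of the experiment."""
        return self.rng.choice(self.resources)

    def record(self, resource, passed_quiz):
        """Log the quiz that followed a resource: the algorithm measuring its own result."""
        self.trials[resource] += 1
        self.passes[resource] += int(passed_quiz)

    def pass_rate(self, resource):
        n = self.trials[resource]
        return self.passes[resource] / n if n else None

    def best(self):
        """Resource with the highest observed pass rate (no significance test applied)."""
        rated = [(self.pass_rate(r), r) for r in self.resources if self.trials[r]]
        return max(rated)[1] if rated else None
```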

A Simplified Flow Chart of the Mastery Learning Algorithm

Now, some might be wondering: where are the deep learning neural networks or machine learning algorithms? The Mastery Learning Algorithm can make use of machine learning in several places, for instance, to predict the difficulty of questions for each user. But machine learning works in the background of the algorithm; it is not the main event. The core design of the algorithm isn’t a machine learning model: it is a pedagogical model.

Designed for curriculum-based learning: The Mastery Learning Algorithm is designed to work well with teachers and with the messiness inherent to real-world classrooms. In a normal classroom, the algorithm won’t know much about individual students, because students rarely use the adaptive system on a daily basis. While students can use the Mastery Learning Algorithm without teacher input, it is designed to let teachers decide the focus of each adaptive session: after all, teachers typically want students to practice the topics they just taught!

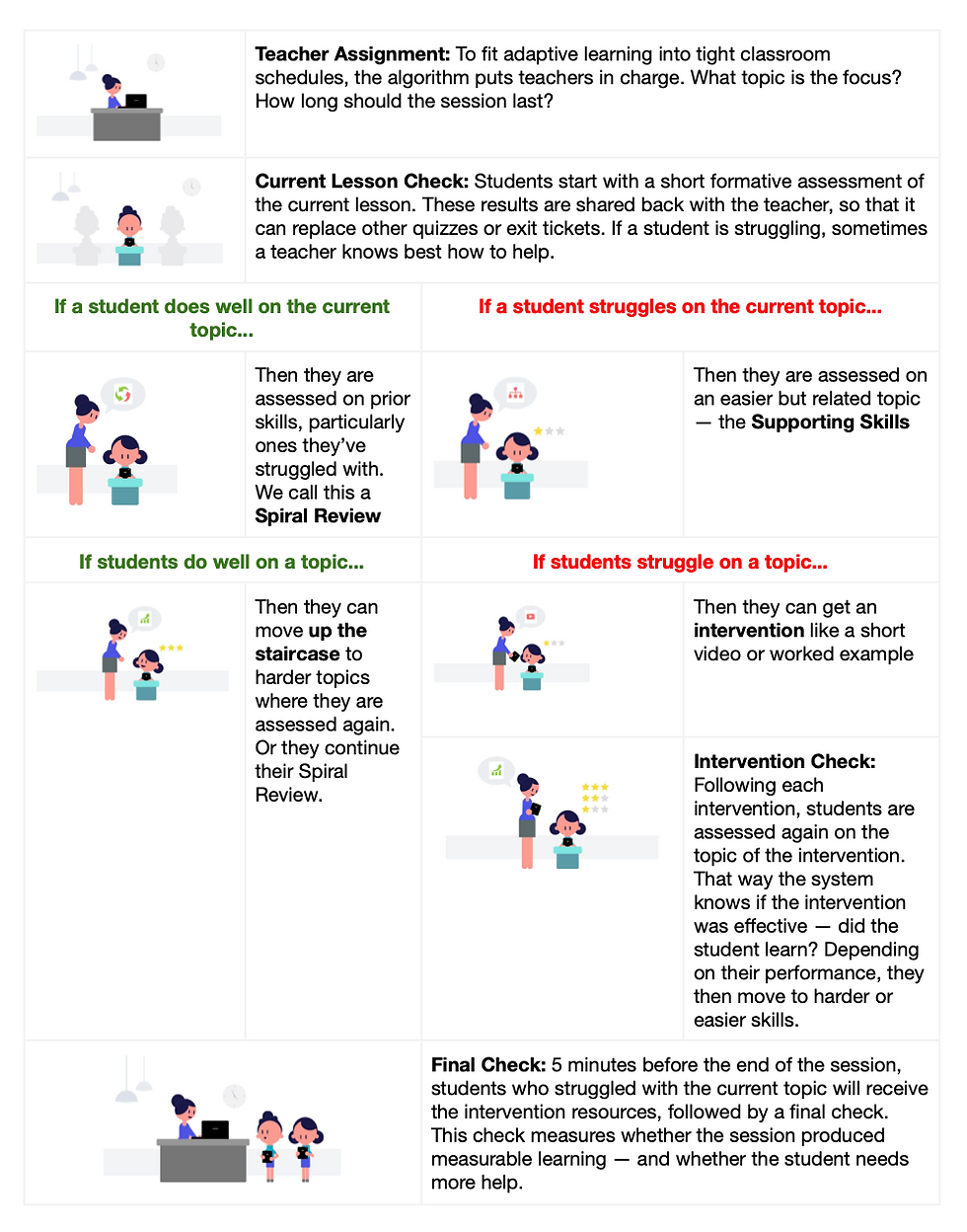

A Quick Overview of a Mastery Learning Algorithm Adaptive Session: When a student begins to use the adaptive system, the algorithm gives them a short assessment of the topic assigned by the teacher (typically what they just learned in class). That way, teachers can use the adaptive system as a reliable formative assessment or exit ticket. If a student does well on the current lesson, the algorithm uses a “Spiral Assessment” to test how the student does on past topics. But if the student struggles with the current lesson topic, the algorithm evaluates precursor skills: the easier skills that may support understanding of the current lesson. For weak precursor skills, students receive automated intervention resources (like instructional videos) followed by short quizzes that measure whether the resource helped. Before the session ends, the algorithm provides a final check to see whether the student shows improvement on the current lesson. All of this data is provided back to the teacher to help them help the student.
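
The session flow just described can be sketched as a single function. This is an illustrative outline under stated assumptions, not the product's implementation: `quiz` and `intervene` are hypothetical callbacks supplied by the caller, the precursor map is hand-specified, and `max_interventions` stands in for the teacher's session time limit.

```python
from typing import Callable, Dict, List, Tuple

LogEntry = Tuple[str, str, bool]  # (step, skill, passed)


def run_session(
    lesson: str,
    precursors: Dict[str, List[str]],   # hypothetical map: lesson -> easier supporting skills
    past_topics: List[str],
    quiz: Callable[[str], bool],        # hypothetical: True if the student passes a short quiz on a skill
    intervene: Callable[[str], None],   # hypothetical: shows a resource (e.g. a video) for a skill
    max_interventions: int = 2,         # stand-in for the teacher's time limit
) -> List[LogEntry]:
    """Run one adaptive session and return a log for the teacher."""
    log: List[LogEntry] = []
    if quiz(lesson):
        # Did well on the current lesson: spiral back to check past topics.
        log.append(("lesson_check", lesson, True))
        for topic in past_topics:
            log.append(("spiral", topic, quiz(topic)))
        return log

    # Struggled on the current lesson: evaluate precursor skills.
    log.append(("lesson_check", lesson, False))
    interventions = 0
    for skill in precursors.get(lesson, []):
        if interventions >= max_interventions:
            break
        passed = quiz(skill)
        log.append(("precursor_check", skill, passed))
        if not passed:
            intervene(skill)
            interventions += 1
            # Quiz again to measure whether the resource helped.
            log.append(("post_intervention_quiz", skill, quiz(skill)))

    # Return to the current lesson's resource, then a final check.
    intervene(lesson)
    log.append(("final_check", lesson, quiz(lesson)))
    return log
```

The returned log is the kind of record a teacher dashboard could display: which checks the student passed, which resources were shown, and whether each one appears to have worked.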

The chart below breaks the Mastery Learning Algorithm down into discrete pieces:

Visual Example

Below, we show an example of a student's experience in the mastery algorithm. In this case, the student failed the initial check of the current lesson (Skill C). The algorithm then assigned the student to a precursor, or supporting, skill (Skill B). Because they didn’t do well on that either, an intervention was triggered, and the student watched a video about Skill B. Following the video, the student took a short quiz. Because the teacher set this session to end within 15 minutes, the algorithm then sent the student back to the video for the current day’s lesson, Skill C. After watching it, the student took a short posttest.

An example session in the mastery algorithm

Conclusion

In a real magic show, knowing the trick ruins the magic. But that’s precisely why adaptive learning isn’t a magic show: knowing how adaptive learning works can help make it work even better.
